Academics Need to Wake Up on AI, Part III

Most of us do not contribute to human knowledge—AI just made it obvious

Please like, share, comment, and subscribe. It helps grow the newsletter without a financial contribution on your part. Thank you for reading.

In Part I, I argued that AI can already do social science research better than most professors. In Part II, I engaged with over a thousand responses, conceding where critics were right, while standing by my main claim: the academic status quo was already broken, and AI is just forcing the reckoning.1 In this Part III, written collaboratively with AI and my peers over the last month, I move from diagnosis to what academics can and can't actually do about it.

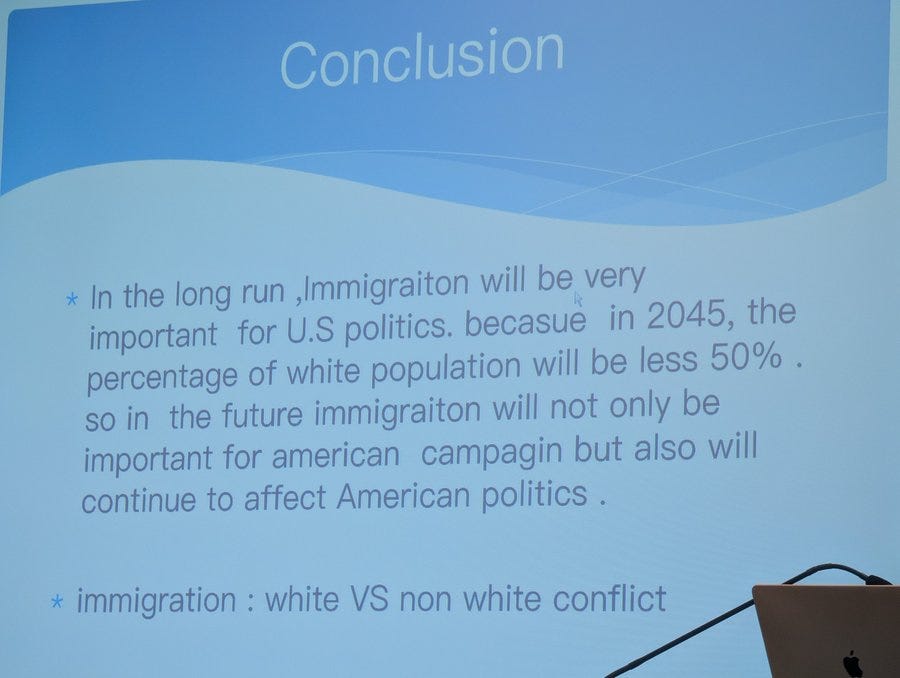

The rather unlikely proximate cause of this third installment on AI was visiting the 2026 International Studies Association (ISA) Annual Convention in Columbus, Ohio—a preeminent multidisciplinary conference of the world’s leading international studies professionals. Or so I was told. What I actually witnessed were presentations so rough they would barely get a C in any of my classes: arguments with no thesis or coherence, grammar errors any spell-checker would catch, presenters reading off their slides as if encountering their own bad arguments for the first time. All without any AI involved, as far as I could tell, judging by the presence of typos and inconsistencies. These were not just grad students, but people with PhDs, tenure, and research budgets.

If AI slop is the crisis everyone warns about, I’d like to know what to call what I saw at ISA or most other big social science conferences, for that matter.2 The contrast was impossible to ignore: I was sitting through these presentations at precisely the moment I was receiving death threats and calls to fire me online for suggesting AI can do research better than most professors. That juxtaposition crystallized the argument for this piece.

21. Most “slop” has always been and still is human slop.

My first thesis was the most provocative thing I’ve said, and I’ve adjusted it only slightly since then: agentic AI can already do most social science research tasks better than most professors globally. I still stand by it. In my recent interview with the Chronicle, they put it more bluntly: “AI Is a Better Researcher Than You.” If you still don’t believe that’s true, let’s talk in a few years.

But the flip side is just as important. If AI can produce better research output than professors, that’s also an indictment of the output those professors were and are still producing without AI.

“Slop” was Merriam-Webster’s 2025 Word of the Year, defined as low-quality digital content produced by AI. But the ISA conference was a reminder that the vast majority of slop has always been human slop. The academic journal system and big conferences in much of the humanities and social sciences were slop factories long before anyone had a ChatGPT subscription. Yes, I really mean that most research is slop.3

Some of it is also what the philosopher Harry Frankfurt would call “bullshit“: work that is indifferent to whether its claims are true, especially on politically charged topics like immigration, where researchers start with the left-wing conclusion and work backward. But slop is broader than bullshit. It also includes work that makes no claim at all, work that is supposed to have craft value, and simply fails. The researcher who finds a dataset before having a question, then dredges for significant results worth publishing, is producing slop. These researchers existed long before AI. They were just slower.

22. Academics were hallucinating and cheating before AI.

The concerns about hallucination and cheating that people raise about AI in academia describe problems that predate it by decades, if not centuries. A massive new project published in Nature this month (which I was a small part of) tested hundreds of social science papers: only about half of statistically significant claims replicated, with median effect sizes shrinking dramatically. This study confirms what the 2015 Open Science Collaboration study found in psychology alone, where roughly two-thirds of findings failed to replicate, and extends it across fields.

We didn’t call these “hallucinations” before AI. We called them “science.” If you think about it, hallucination and inspiration are actually not as different as they sound. Both involve generating combinations that go beyond the input. We call it hallucination when the result is wrong and a breakthrough when it’s right.

Meanwhile, academics routinely cite papers they haven’t read beyond the abstract.4 At least AI hallucination rates are tracked and improving. Human hallucination rates in academia are not tracked at all. We just call them “contributions to the literature.” And if you’re a peer reviewer, you don’t even have to hallucinate on your own: you just write “please cite me” and move on.

Some research is genuinely excellent. But before we worry about AI-assisted cheating, let’s reckon with the human kind for a moment. Diederik Stapel, Marc Hauser, Francesca Gino, Dan Ariely: the list of high-profile fraud cases keeps growing, and these are just the people who were caught. Aside from outright fabrication, p-hacking, HARKing (hypothesizing after results are known), and selective reporting were for years so common that they barely registered as misconduct. We’ve made real progress in understanding these practices, but they remain widespread enough to shape what gets published. Beyond all this, senior professors have always put their names on papers written primarily by graduate students, and entire books have been assembled by teams of research assistants working under a famous scholar’s name. None of this was considered cheating until AI made the process cheaper and more apparent.

23. The “stochastic parrot” metaphor describes humans better than AI.

One of the most influential slogans in the AI debate5 has always functioned as a thought-terminating cliche. As Cate Hall observed, it is a potent coinage: fun to say, conceptually efficient, and it has permanently colonized many people’s minds despite not being true of today’s models. A genuine linguistic work of art. It is also empirically false: every major frontier model since GPT-4 has been trained on non-textual input, and the original argument’s own logic requires text-only training to work.

Now consider something completely different yet still very familiar: the “In This House We Believe” yard signs. Science Is Real. Love Is Love. No Human Is Illegal, etc. You can believe every line and still have no coherent policy position on any of these issues. What does “no human is illegal” imply for enforcement policy: open borders, amnesty, something else? The sign doesn’t say, because saying would require confronting contradictions. It is a loyalty oath, not an argument.

But the deeper point is that this is exactly what AI gets criticized for: producing shallow, feel-good statements that communicate belonging rather than meaning. Turns out humans have been doing that with or without AI for as long as we’ve had lawns to put signs on.

The yard sign is not an outlier. It is how most people seem to engage with most issues: adopt the position of your group, repeat it, move on. The substance of what you argue matters far more than how you frame it, but framing is easier, which is why it dominates public discourse, from Twitter threads to conference presentations.

I saw this firsthand at ISA, where tenured peers presented work that amounted to the academic equivalent of a yard sign, complete with a few misidentified regressions to give it the veneer of science. The stochastic parrot critique was meant to diminish AI. It ended up being a better description of human intellectual life than anyone intended.

24. Yes, consenting adults can use AI for writing. Policing it doesn’t work.

In Part II, I noted that AI detectors are often not very useful and create more problems than they solve. But the deeper problem is the impulse behind them: the belief that AI involvement is inherently contaminating, regardless of what it produces. Quinn Que makes a fascinating case that the obsession with AI writing detectors is akin to enforcing a “one-drop rule,” the principle from 19th-century American racial classification: any trace of AI involvement contaminates the entire work, regardless of quality or the author’s intent.

I was initially skeptical of the analogy, but it’s quite right. In the view of the anti-AI activist, any word you have not written yourself is a moral pollution. Although using AI for writing is not technically “illegal,” there is basically a one-drop rule governing whether you are a legitimate writer or a fraud, a good person or a bad one. Hence, the condemnations and death threats to people like me who disclose their AI usage for “outsourcing their thinking to the machine.”

As I argued in Part I, much of the opposition to AI is status protection dressed up as principle. Andy Masley takes this further, arguing that the chatbot moral panic may have a source beyond the status project. This source is something closer to superstition (”chatbots are demonic”): the sense that AI-produced text is spiritually tainted, that there is something wrong or even evil about a machine that can write, regardless of what it writes.6

Even Megan McArdle, who recently honorably disclosed her AI use and sparked among journalists the same conversation academics had been having on Bluesky a few months earlier, felt compelled to defend herself by insisting that “AI didn’t touch copy.” I admire her for speaking up. But why should the copy question even be an issue? If the work is good and the process is disclosed, the rest is aesthetic preference dressed up as ethics. Where does impurity start? Google search? Auto-correct? Spell check? Transcriptions?

And even setting all that aside: barring some Dune-style scenario of an AI catastrophe followed by humanity coordinating to ban the technology, widespread AI-assisted non-fiction writing is almost inevitable in equilibrium, given the existing incentives. The flip side worth mentioning is that even if you do manage to write well entirely on your own without “AI pollution,” no one is going to be rewarding you for that purity very soon, either.

25. Not using the latest AI tools in your research and writing is malpractice.

Matthew Yglesias recently argued that large language models are underused in journalism. His point applies to academics, too: the purpose of research is the useful output, not the human-mediated process. The rigor should be in the thinking and the verification, not in whether a human or a machine typed the sentences or pressed enter when running the regressions in R. As Hollis Robbins put it well, professors should probably be testing AI models before breakfast (basically be like scott cunningham).

The most mundane use of AI is catching errors. Consider the New York Times headline on April 3, 2026: “A North American Treaty Organization Without America?” The correct name is, of course, the North Atlantic Treaty Organization. The Times ran a correction the next day. Some speculated the error was caused by AI. We’ll never know, and frankly, it doesn’t matter. Any sufficiently smart LLM workflow would have caught the mistake in seconds. A simple automatic routine for all headlines—”Does this contain any errors? Verify against two separate sources”—would have saved the Times a news cycle of embarrassment.

But fact-checking is the floor, not the ceiling. The more interesting use is doing things that weren’t possible before. My now co-author, Kelsey Piper, recently had Codex build her an interactive website to help her actually understand a political science paper she was reviewing, then did the task herself the way the study’s participants had. AI doesn’t just compress the time it takes to produce an output. It lowers the cost of the kind of active engagement most researchers skip: building the thing yourself, stress-testing an argument, rerunning an analysis the way participants did. That’s what deeper understanding actually requires.

The same applies to academia. Half the ISA presentations I attended in Columbus could have been meaningfully improved by a cursory loop through ChatGPT: checking grammar, tightening arguments, catching logical gaps. These tools are free or nearly free. Choosing not to use them is a choice to deliver worse work than you’re capable of, especially if your calling is to inform the public. But journalists and academics alike should not use AI just to catch typos in headlines. They should use it to build interactive visualizations, stress-test arguments, and genuinely understand the complicated things they are writing about.

But what about writing itself? You may have seen a common response to anyone using AI in their writing: “Why should I read the article and not the prompt that caused it?” Sounds reasonable, right? Well, no, if you think about it a bit.

Why eat the meal instead of reading the grocery list? Why look at a chart instead of the ggplot script? Why read the book instead of the author’s notes? The prompt gives you no new knowledge. The output does. That’s the whole point. As I argued in Part II, different people with different expertise, data, and context (as manifested in their claude.md files) produce entirely different outputs from the same prompt. It’s a skill issue.

I get it: it can feel strange to spend more time reading something than the author spent producing it. But we’ve been here before. A chart that took seconds to generate in R can take minutes to read carefully. Nobody demands or fantasizes about seeing the code only instead of the chart. They evaluate the chart and what it says—the new knowledge not available before.

26. LLMs may indeed produce new knowledge.

As recently documented by John Burn-Murdoch, AI chatbots consistently pulled users toward expert consensus, the opposite of what social media does. The tools don’t just help you write better. Used actively, they help you think more carefully.7 This way, LLMs may even reverse the rise of populism. But fact-checking is not the same thing as producing new knowledge.

Before we ask whether LLMs can produce genuinely new ideas (there are good examples of that), we should ask how many humans do. Most academics spend their careers recombining existing ideas in minor variations, applying the same methods to slightly different datasets, producing incremental work that nobody outside their subdiscipline will ever read. Original thinking is extraordinarily difficult and rare in any generation. I don’t mean it as an insult to any of my colleagues (or myself). But the bar that LLMs need to clear is lower than we like to admit.8

My friend Robert Kubinec, a brilliant political methodologist and published fiction author9 at the University of South Carolina, seems to be pretty skeptical about much of the AI hype. He argues that “LLMs never create knowledge, [which] only exists in human brains. They can only compare one set of knowledge to another.” I respect Bob, so it’s OK if we disagree. My response: The philosophical question about self-awareness is real, but it’s orthogonal to the practical one. Whether or not the model “understands” anything, the output either contains new information useful to humans or it doesn’t.10

The most suggestive recent example is Claude Mythos, Anthropic’s new frontier model. In a few weeks of testing, it flagged thousands of previously unknown security vulnerabilities across major operating systems and browsers, including one that had gone undetected for 27 years. Whatever you want to call that, “compare one set of knowledge to another” does not quite cover it.

The best conceptual theories in social science are already recombinations of existing ideas. Anthony Downs built his rational choice theory by transplanting microeconomic utility maximization into voting and literally called his book An Economic Theory of Democracy. Baumgartner and Jones borrowed punctuated equilibrium from evolutionary biology and applied it to policymaking. Social network analysis imported graph theory wholesale from mathematics. Axelrod fused the prisoner’s dilemma with evolutionary fitness selection. Alexander Wendt went further and applied quantum theory to international relations in Quantum Mind and Social Science—a move many found (rightly) ridiculous, but which Cambridge University Press still published. Social science rarely invents from scratch. It translates across domains, and the translation is the theoretical contribution.

That is structurally identical to what LLMs do: recombine patterns and concepts across contexts. Sometimes the result is nonsense. Sometimes it is productive. The same is true of human recombination. Wendt’s quantum IR has been criticized as a mere metaphor masquerading as physics, but as mentioned above, Cambridge University Press published it. If that counts as knowledge production, it is hard to see why LLM-generated recombinations wouldn’t.

To his credit, Bob conceded the practical point even while holding the philosophical one: “the question is how to use this capability to advance knowledge.” Exactly. As I said in Part I, stop debating whether LLMs “truly understand” while the people with the most at stake are already using the tools to improve their work.

27. For critics, the mental model of an AI user is stuck in 2023, which is ages ago.

Remember how we got into this mess. Students got enthusiastic about using AI before their professors, using the very first public, free models like ChatGPT 3.5. The result is that many professors and intellectuals still picture the typical AI user as an undergraduate trying to cheat on an essay or their assistant failing by submitting AI slop. That may still describe a lot of casual AI use around the world. It does not describe how top researchers in most fields are working with these tools, or how anyone trying to use AI responsibly and mindfully actually operates.

Stefan Schubert put his finger on this the best: we underrate other people’s rationality, assuming they apply new tools much more mindlessly than they really do. There is a vast space between asking ChatGPT to write a whole paper and writing down every single word yourself. When you write “yourself,” you are already using shortcuts. You are not doing deep research on every reference you make. You Google a statistic and trust the headline number. You skim an abstract and cite the paper. If you have resources, you outsource verification to a research assistant or a fact checker. AI lets you do much of this in a more systematic, automated, and cheaper way.

Like many, Derek Thompson rightfully believes that writing is thinking and that outsourcing the full writing process to AI leaves your mind empty. But, while much writing is thinking, thinking is not only writing. Making art is thinking. Talking is thinking, too. As Dina Pisareva argues, prompting is also thinking, if you have a good Socratic partner on the other side of the exchange.

Thompson also acknowledges that all writing has always involved reaching outside the writer’s mind for ideas, facts, editing, and fact-checking. The line between legitimate assistance and illegitimate outsourcing has always been blurry, and AI didn’t create that blur. It just made it impossible to ignore. Thompson’s own conclusion: “We should be honest and open about the blur rather than declare everybody with an open Claude window a part of the slopclass.”

Where does that leave disclosure? Many good folks and top academic journals suggest complete AI-use disclosure as a solution. But disclosure is not sustainable in a game-theoretic sense: disclosers bear reputational costs while secret users free-ride, so the equilibrium pushes toward non-disclosure. People like Megan McArdle and I are all living proof of this problem. And the better framing, as Ryan Briggs puts it, is that AI complements expertise: automated RAs checking your work, formalizing arguments as you go, gathering data on demand. It’s a multiplier that lets capable people think better and faster.

My sense is that the only case where disclosure is genuinely owed is when the audience has a reasonable expectation of fully human-produced work, and that expectation is part of what they’re paying for. The best analogy is a live concert in the narrow case of personal or creative writing: if you show up expecting a live performance and the artist is lip-syncing, that’s a legitimate grievance. More precisely, disclosure is owed when non-disclosure would mislead the audience about what they are getting. A journalist publishing under a byline is promising accountability and originality, not keystroke provenance. A memoirist is promising both. The test is what the author is putting their name on, not whether a tool was involved.

But science and journalism are not live shows. They’re about discovering and sharing new knowledge. Nobody discloses that they used spell-check, research assistants, or a Google search. The norms for creative writing and art will be different, rightly so, because audiences there are partly paying for the human experience of making the thing. Humanities will probably land somewhere in between through some lengthy and contentious process, with many friendships being broken. But for research and journalism, “disclose everything” doesn’t get us where we need to go. What readers should care about is accuracy and whether the author takes full responsibility for what’s on the page. Provenance is a much weaker signal than either of them.

28. Nobody knows anything, myself included. That’s OK—we’ll figure it out together.

I initially shied away from talking to journalists about AI because I’m not an expert on it in any meaningful sense. But I’m increasingly realizing that nobody is an expert on whatever this all is, not really, and waiting for one to appear is how you end up with no norms at all. I still shy away from giving any advice beyond installing Claude Code (or your agentic tool of choice) and talking to it to solve a problem you have at hand.

As usual, Arthur Spirling was blunt on this point: the AI-academic accounts that constantly offer “advice” to colleagues and PhD students, as if they were in the labs developing the models, are tedious. They’re spectators, like the rest of us.11 Even the people in the labs have no idea how good the models will get beyond a few months.

So we’re in an awkward position: somebody has to start establishing the norms of the new workflow environment, and the people doing it will inevitably be people who don’t fully understand what they’re building norms for. That’s weird and uncomfortable. It’s also how most other institutional transitions have probably worked, though at a much slower pace.

We went through a version of this that seems like ages ago, when professors had to accept that policing students’ AI use fully and meticulously was neither productive nor possible. The same is now true for researchers. You can audit the parts that actually matter, like the replication code, and LLMs already make it easier. Provenance is the one thing you can’t audit, and it’s the thing we shouldn’t be spending energy on anyway. You have to follow the incentives and build systems that ensure the tools are used well, not pretend they aren’t being used at all.

On a related note regarding teaching, I’ve been on leave, so I’ve shied away from advice here too. Given the many questions I’ve received, I’m more pessimistic about the effects of AI on teaching than on research. For instance, while I use AI openly and extensively in my research and writing, I plan to ban all electronic devices in my substantive classes and bring back in-person written and oral exams.

These are not contradictory positions. The classroom is precisely where students need to build the cognitive skills that make AI collaboration productive later. You cannot meaningfully direct an AI tool if you never learned to think without one. The skill-atrophy concern from Part II is most valid here: students need to internalize the fundamentals before they outsource them. Research is where you deploy the best tools at your disposal to produce a worthy outcome. Teaching is where you make sure the next generation can actually use those tools well.12

29. The best work happens when humans and AI collaborate.

I still see many folks pledging not to use AI in their writing. This is about as sensible as pledging not to accept help from research assistants or co-authors. So, I pledge the opposite: I will use the latest LLMs, and for that matter any other available tool or human co-author, to best improve my research or the way I communicate it. That way, if my name is on it, you can be sure it reflects my own best judgment.

Many academics, especially in the humanities, still seem to believe that it matters how much time you spend on doing your work. But the folk labor theory of value is wrong in economics, and it is wrong here as well. If you’re a weirdo who wants to spend years running all your robustness checks manually with matrices inverted by hand instead of running a few R commands or manually translating or transcribing original manuscripts, that’s on you. I didn’t care much if those international studies professors at ISA spent 10 or 100 hours on their human slop presentations. The work is either good or it isn’t.

That said, there are some norms worth establishing. When you write “I believe” or “I feel,” that first person should genuinely be you. The pronoun “I” carries an implicit promise of a human voice behind it. A factual claim, like the correct name of NATO, doesn’t care who typed it. But personal conviction does. Think of a live concert: the audience pays for a real performance, not a lip-sync. When you use “I,” the reader is entitled to expect that you mean it. That doesn’t require literally typing (or dictating) every word yourself. You can direct the model, work from your notes, and read it over carefully, but it does require that the conviction is yours.

This piece, for instance, was openly and proudly written using an iterative back-and-forth conversation with Claude Code, based on my original ideas, conversations with peers, and useful suggestions by human academic and non-academic colleagues.13 Part I experimented with AI capabilities with no human editing. Part II reflected with 100% human voice. Part III, which you’re reading now, combines my voice with AI capabilities, completing the circle. I’ll let you decide which one was best.

So, where do humans retain an edge? As Yiqing Xu argues, probably in open-ended environments where training data doesn’t yet exist and in tasks that require direct human interaction. This may change soon. But we chose this career because we find meaning in figuring things out, not just because we’re still better at it. That’s why I don’t see any contradiction in outsourcing most grunt work to AI (or RAs, if you hold traditional values) and focusing on what gives us the most meaning—coming up with important questions about the world and answering them with the best tools available.

30. The real risk in science is human slop at AI speed. We can still prevent it.

AI amplifies whatever you bring to it. Bring genuine curiosity and hard questions, and AI will help you produce something worth reading. Bring nothing, and you’ll produce nothing faster.

But there’s reason for optimism. The same Nature findings that revealed how broken reproducibility is also showed that journals with mandatory data and code sharing had meaningfully higher reproducibility rates. AI can already accelerate this: nothing but inertia precludes journal editors from instituting automated reproducibility checks and mandatory code verification as a condition of submission. These systems can be set up as quasi-deterministic, with very little room for error, and authors can always challenge an automatic desk rejection if they believe the in-house agentic workflow failed to determine how to reproduce their code.

More ambitiously, AI lowers the cost of doing the kind of large-scale, data-intensive work that used to require being Raj Chetty with a team of 30 research assistants and access to every administrative dataset in the country, or being Daron Acemoglu… with pencil and paper. A junior scholar with Codex can now attempt projects that would have been logistically impossible five years ago. The bar for what gets published should rise, because the bar for what can be produced already has.

The standard hasn’t changed: if you put your name on something, you stand by it. Judge the quality of the output, not the process. The conversation about AI slop is a distraction from the harder question, one we should have been asking long before the chatbots arrived: why have we been tolerating so much human slop in the first place?

***

My goal when I posted Part I was simple: to bring the conversation that was already happening behind closed doors and in DMs into the open. Still, I keep hearing the same thing from my colleagues: “Alex, we know you use AI, we do it too, but can’t you just be quiet about it?”

I understand the fear that AI will be used irresponsibly, producing more slop than it prevents. But if you think about it, “be quiet about it” is just advocating for universal hypocrisy as a professional norm. Part of what I wanted was for the uncertainty everyone is experiencing about their workflows and their futures to reach a more stable equilibrium. That requires honest conversation, not silence.

And it is already happening. Emily Oster recently shared Isaiah Andrews’ advice on AI for MIT PhD students in economics, calling it something that should be circulated to all PhD cohorts. As Andy Hall noted, the most important thing about it was not any particular advice per se but having high-profile faculty send a clear signal: this is something you need to take seriously. Even Dylan Matthews, one of the most thoughtful people in journalism, recently admitted the AI people have been right a lot.

The change is real. Academics are already half-awake, and they are not going back. Aspiring grad students and junior scholars should tread carefully but embrace learning about and using AI tools fully. The scholars, intellectuals, and writers who refuse to engage will not be rewarded for their purity. They will simply be outperformed by colleagues who bring the same intellectual rigor, the same curiosity, and better tools.

I should acknowledge that, together, those two posts became the most widely read thing I have ever written (with or without Claude). People at immigration or any other conferences, not to mention university administrators across the country, now ask me for AI advice instead of immigration or political science takes. This says something about how seriously we take things in this field, and also that I should start charging more for my talks and consulting.

I don't mean to single out ISA unfairly. The organizers were working under real constraints, there were plenty of great panels and serious scholars at the conference, and I have written elsewhere about concrete ways to make academic conferences better.

That's probably the assertion that received the most pushback from early readers of this piece. Some argue that my use of "slop" stretches the concept too far, that AI slop (over-produced, polished but empty) and bad academic work (careless, under-produced) are different failure modes. I normally abhor conceptual stretching in scientific writing, but I genuinely don't think that's what's happening here. What I saw at ISA was over-produced, meaningless work that looked scientific but added nothing to human knowledge. That is "slop" by any definition.

I can attest that many citations of my own work have been creative reinterpretations of what I actually found: findings simplified, conclusions reversed, indicating almost certainly that those who cited me haven't read my work.

“Stochastic parrot” comes from a 2021 paper co-authored by the linguist Emily Bender, arguing that large language models produce text by predicting probable word sequences without understanding.

Dean W. Ball goes even further and argues that much of the left’s AI denial rests on a worldview where the tech industry is composed of “vapid morons” whose accomplishments are always superficial, always based on some grand theft. This heuristic may have worked for crypto. It does not work for tools that millions of researchers and writers are quietly using to do better work.

I say “used actively” because there is real evidence that passive, uncritical reliance on AI can degrade critical thinking skills, especially for routine tasks. That’s a real concern, and it’s why I argue below that the classroom is where students need to build the foundations before they outsource them.

As Hollis Robbins argued a year ago, the only academics who will retain value in the AI era are those working at the frontiers of knowledge. The more time passes, the less outrageous that argument seems.

Y’all should check out the Bayesian Hitman. It will rock your priors for sure.

As Alison Gopnik and colleagues recently argued in Science, LLMs are best understood as cultural technologies, like writing, print, and libraries, or tools that enable new forms of knowledge production even if they don’t “think” themselves.

The exception that prove the rule are Aniket Panjwani and Scott Cunningham, who have spent months publishing detailed first-hand accounts of what it actually looks like to do research with Claude Code. At least to me that kind of write-up was genuinely useful and instrumental to getting my AI series out.

There is a fair worry lurking here: grad students trying to learn the fundamentals are now competing for journal space with professors who have legions of AI agents. That's real, and it's why the teaching answer isn't "ban AI forever." The answer is scaffolding—learn to think without the tool first, then use it with judgment.

I'm particularly grateful to Steven Adler, Ryan Briggs, Tina Marsh Dalton, Josh Gellers, Jimmy Alfonso Licon, Igor Logvinenko, Ilia Murtazashvili, Kyle Saunders, Dina Pisareva, Quinn Que ❁, Ben Radford, Mike Riggs, Hollis Robbins, Jim Walsh, Sean Westwood, Yiqing Xu, and Emma Zhang for their helpful suggestions and pushback.

Alexander, I understand that using a heavy dose of optimism to shake the academic community is a fair tactical move. But there is a real danger that unchecked optimism might actually do more structural damage to academia than the denialism you are fighting.

I want to point out a few blind spots in this narrative, not to defend the old ways, but to look at the actual nature of the tool we are dealing with.

First, regarding the nature of the models. We don't need to rehash the "stochastic parrot" debate, but we have to stay grounded in how the current architecture actually works. Even with the recent introduction of reasoning steps, these systems remain statistical approximations at their core. They haven't developed a mechanism to genuinely understand their own errors; they are still running loops within their probabilistic weights. They provide a very high-quality simulation of reasoning, but they lack the metacognition to know when they are wrong. Doing heavy AI-assisted R&D I see such issues every single day.

This brings up the core problem: cognitive hacking. AI generates fluent, confident text and our brains naturally associate with expertise. It requires an enormous, unnatural amount of willpower to force yourself to rigorously validate something that already looks perfect. It works against our dopamine system, making us intellectually lazy without us even realizing it.

And this is where the specific vulnerability of your field comes in. In mathematics there is a strict apparatus for validation. But in social sciences, the validity of a hypothesis is often tied to how coherently it is argued and framed. In these fields, an imitation generator completely breaks the system. When a generated text looks highly expert and logically linked, verifying the actual truth of the claims becomes incredibly difficult and energy-consuming. The AI exploits the exact metric we usually use to judge quality there.

The goal shouldn't be to just accept the output because it saves time and looks good. The real challenge right now is designing strict, effective workflows that acknowledge these cognitive traps and the fundamental limitations of the tool.

Because of all this, I honestly think the current academic pushback and outright bans might actually be doing more good than harm at this specific moment. I would gladly be an optimistic advocate for progress myself, but there are critical, unresolved structural problems here. We need to figure out how to handle them before we let the current wave of hype drive widespread, uncritical adoption. The goal shouldn't be to just accept the output because it saves time. The real challenge right now is designing strict, effective workflows that acknowledge these cognitive traps and the fundamental limitations of the tool.

Jesus Christ that slide really is embarrassingly bad. These conferences are supposed to have experts working at the frontier of human knowledge, and yet this academic is talking about the sort of thing that the average patron of your local watering hole is already aware of. Does he really think he's the first person in history to stumble across ideas like demographic change and racial conflict!?